Week 13: 29 March 2024

#01 🌧️💻🌍 | accurate flood forecasting.

AI-based flood forecasting has evolved from early experiments with artificial neural networks in the late 1990s to systems that combine machine learning with IoT sensor networks, significantly improving prediction accuracy.

In the late 1990s, researchers at the University of Iowa began exploring the potential of artificial neural networks (ANNs) for flood forecasting in the Raccoon River Basin. This study, published in 1997, marked one of the earliest known applications of AI techniques in hydrological modeling and flood prediction.

A few years later, in 2000, a team from the National Taiwan University applied ANNs to forecast flooding in the Tanshui River Basin, using historical data on rainfall, river levels, and other relevant variables to train their neural network models. Their work demonstrated improved accuracy compared to traditional hydrological models, further solidifying the potential of AI in this domain.

As the new millennium dawned, researchers at the University of California, Davis, took a different approach. In 2001, they utilized genetic programming (GP), a type of evolutionary algorithm, to develop flood forecasting models for the Russian River Basin in California. The GP-based models outperformed traditional regression-based models, highlighting the versatility of AI techniques in tackling complex hydrological problems.

The year 2004 saw another significant contribution from researchers at the University of Adelaide, Australia. They explored the use of support vector machines (SVMs), a powerful machine learning algorithm, for flood forecasting in the Huai River Basin in China. The SVM-based models demonstrated impressive performance in predicting flood levels and timings, further expanding the repertoire of AI techniques applicable to this field.

In 2005, the National Weather Service (NWS) in the United States took a significant step by incorporating AI techniques, such as ANNs and fuzzy logic, into their hydrological models for operational flood forecasting. This marked one of the earliest adoptions of AI by a national agency for this critical application, setting the stage for more widespread integration of these technologies.

As computational power and data availability increased, the field of AI-based flood forecasting gained momentum. Governments, research institutions, and private companies around the world recognized the potential of these technologies to improve the accuracy of flood predictions, enhance early warning systems, and ultimately save lives and minimize economic losses.

One notable example is the Texas Flood Early Warning System (TexasFloodALERT), which utilizes a network of IoT sensors to monitor river levels, rainfall, and other data, integrating this information with AI-based flood forecasting models to provide timely alerts and warnings.

In India, the National Hydrology Project has deployed a network of IoT-enabled water level sensors and weather stations, providing real-time data to AI-based flood forecasting systems developed by organizations like the Indian Institute of Technology Kharagpur. This initiative aims to improve flood preparedness and response efforts in a country that is highly vulnerable to these natural disasters.

The European Flood Awareness System (EFAS), a collaborative effort among European countries, incorporates data from IoT sensors, satellite observations, and numerical weather predictions to generate AI-based flood forecasts and early warnings, enabling cross-border coordination and response efforts.

In Indonesia, the startup PetaBencana, founded by Nashin Mahtani and Yantisa Akhadi in 2017, has developed a platform that combines IoT sensor data, social media reports, and AI-based flood modeling to provide real-time flood monitoring and forecasting for Jakarta and other cities. This innovative approach leverages crowdsourced information and the power of AI to enhance situational awareness and decision-making during flood events.

As the field progressed, the adoption of AI-based flood forecasting solutions extended beyond government agencies and research institutions. Private companies, ranging from consulting and technology firms to insurance providers, recognized the value of these technologies in mitigating flood risks, improving risk assessment, and developing innovative insurance products.

Insurance companies like Swiss Re, Munich Re, and Aon have developed or partnered with AI solution providers to enhance their flood forecasting capabilities, enabling more accurate risk assessment and pricing models. This has disrupted the insurance market, leading to the development of dynamic pricing models, parametric insurance products, and micro-insurance offerings tailored to specific flood risk profiles.

For instance, in Thailand, insurance companies like Muang Thai Insurance partnered with the AI startup Hydro-Informatics Institute (HII) in 2022 to develop dynamic pricing models based on real-time flood forecasting data. The AI system, developed by HII's founder Dr. Chaiyuth Sukhsri, allows insurers to adjust premiums based on changing risk levels in different regions.

In Malaysia, the insurance company Allianz implemented an AI-powered flood risk monitoring system developed by the startup ERMA in 2021. The system, created by ERMA's co-founders Dr. Lim Choun Sian and Dr. Tan Kee Siong, adjusts premiums dynamically based on forecasted flood risks across the country.

In China, insurance companies like Ping An Insurance integrated AI-based flood forecasting with satellite imagery and drones in 2020 to rapidly assess flood-affected areas and expedite claims processing. This initiative was led by Ping An's Chief Innovation Officer, Jonathan Larsen, and leveraged AI models developed by the company's in-house AI research team.

In Brazil, the insurance company SulAmérica developed an AI-powered platform in 2019 that automatically identifies and processes flood-related claims based on real-time data from weather sensors and hydrological models. The platform was created in collaboration with the AI startup Opinaia, founded by Dr. Rafael Iglesias and Dr. Marcelo Fernandes.

In South Africa, the insurance company Santam launched an AI-based flood forecasting platform in 2022, developed in partnership with the University of Cape Town's African Institute for Mathematical Sciences (AIMS). The platform provides policyholders with early warnings and recommendations for preventive measures, such as sandbagging or temporary flood barriers, based on AI-powered flood predictions.

In Vietnam, the insurance company Bao Viet partnered with local authorities and the AI company Pirvate in 2021 to develop a flood forecasting system that triggers alerts and evacuation plans for policyholders in high-risk areas. The system was developed by Pirvate's founder, Dr. Nguyen Thi Thanh Nhan, and utilizes AI models trained on historical flood data.

In Bangladesh, the insurance company Green Delta introduced a parametric flood insurance product in 2023, developed in collaboration with the AI startup Floodrik. The product automatically triggers payouts to policyholders based on predefined flood conditions, as determined by Floodrik's AI-based flood forecasting models created by its founder, Dr. Farzana Rahman.
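The parametric mechanism described above, where a payout follows automatically once a predefined flood condition is met, can be sketched in a few lines. This is a minimal illustration only: the `Tier` class, the water-level thresholds, and the payout fractions are hypothetical and are not taken from any insurer's actual product.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Tier:
    """One trigger level of a hypothetical parametric policy."""
    min_level_m: float       # water level (metres) at which this tier applies
    payout_fraction: float   # share of the insured amount paid at this tier


def parametric_payout(level_m: float, insured_amount: float, tiers: List[Tier]) -> float:
    """Return the payout for a measured or forecast water level.

    The highest tier whose threshold has been reached determines the payout;
    no loss assessment is involved, only the predefined trigger.
    """
    fraction = 0.0
    for tier in sorted(tiers, key=lambda t: t.min_level_m):
        if level_m >= tier.min_level_m:
            fraction = tier.payout_fraction
    return insured_amount * fraction


# Illustrative thresholds (assumed values, not real policy terms).
EXAMPLE_TIERS = [Tier(2.0, 0.25), Tier(3.0, 0.5), Tier(4.0, 1.0)]

if __name__ == "__main__":
    print(parametric_payout(3.4, 1000.0, EXAMPLE_TIERS))
```

Because the trigger is a measured or modelled index rather than an assessed loss, the payout can be computed as soon as the index value is available.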

In Kenya, the insurance company Lami launched a micro-insurance product in 2021, providing affordable flood coverage to low-income households. The premiums and payouts are calculated using AI-based flood risk assessment models developed by the startup Cloud to Street, founded by Bessie Schwarz and Beth Tellman.

However, the advancement of AI-based flood forecasting has not been without challenges. Concerns over data privacy, algorithmic bias, and the need for transparency and explainability have emerged as critical considerations. To address these issues, researchers and developers have explored techniques such as distributed AI, federated learning, and explainable AI.

Distributed AI and federated learning approaches allow multiple organizations or regions to collaboratively train AI models on their local data while preserving privacy and without the need to share raw data. This has facilitated cross-border collaboration and enabled the development of more comprehensive and accurate flood forecasting systems.
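As a rough sketch of the idea, the following shows one common federated learning scheme, federated averaging, applied to a toy linear model. Everything here is an assumption made for illustration: the single rainfall feature, the linear model, the learning rate, and the function names are hypothetical, and real systems use far richer models and add secure aggregation.

```python
from typing import List, Tuple

Sample = Tuple[float, float]   # (rainfall, river level), hypothetical units
Weights = Tuple[float, float]  # (slope, intercept) of a toy linear model


def local_update(weights: Weights, data: List[Sample],
                 lr: float = 0.01, epochs: int = 50) -> Weights:
    """Train on one region's private data with plain gradient descent."""
    w, b = weights
    n = len(data)
    for _ in range(epochs):
        grad_w = sum((w * x + b - y) * x for x, y in data) / n
        grad_b = sum((w * x + b - y) for x, y in data) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return (w, b)


def federated_average(updates: List[Weights], sizes: List[int]) -> Weights:
    """Combine regional models, weighting each by its number of samples."""
    total = sum(sizes)
    w = sum(u[0] * n for u, n in zip(updates, sizes)) / total
    b = sum(u[1] * n for u, n in zip(updates, sizes)) / total
    return (w, b)


def federated_round(global_weights: Weights,
                    regional_datasets: List[List[Sample]]) -> Weights:
    """One round: every region trains locally, only weights are shared."""
    updates = [local_update(global_weights, d) for d in regional_datasets]
    sizes = [len(d) for d in regional_datasets]
    return federated_average(updates, sizes)


if __name__ == "__main__":
    region_a = [(1.0, 2.0), (2.0, 4.0)]
    region_b = [(1.0, 2.0), (3.0, 6.0), (2.0, 4.0)]
    weights: Weights = (0.0, 0.0)
    for _ in range(20):
        weights = federated_round(weights, [region_a, region_b])
    print(weights)
```

Only the model weights cross organizational boundaries in this sketch; the raw samples in `region_a` and `region_b` never leave the functions that train on them.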

Meanwhile, explainable AI techniques aim to provide stakeholders with insights into the underlying reasoning and justifications behind the AI models' predictions. This transparency fosters trust and enables more informed decision-making by authorities, emergency responders, and affected communities.

As the field continues to evolve, researchers and developers are exploring hybrid models that combine different AI techniques, such as machine learning, deep learning, and physical models, as well as ensemble forecasting methods that leverage the strengths of multiple models to improve overall accuracy and robustness.
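One simple way to combine several models into an ensemble forecast is a weighted average in which models with lower historical error receive more weight. The sketch below assumes that scheme; the function names and the inverse-error weighting are illustrative choices, not a description of any specific operational system.

```python
from typing import List


def inverse_error_weights(errors: List[float]) -> List[float]:
    """Turn each model's historical error into a normalized weight.

    Smaller error means larger weight; errors must be positive.
    """
    inverse = [1.0 / e for e in errors]
    total = sum(inverse)
    return [v / total for v in inverse]


def ensemble_forecast(predictions: List[float], weights: List[float]) -> float:
    """Weighted average of the individual model predictions."""
    return sum(p * w for p, w in zip(predictions, weights))


if __name__ == "__main__":
    # Hypothetical water-level forecasts (metres) from three models,
    # with assumed past errors for each model.
    predictions = [2.0, 2.6, 2.3]
    weights = inverse_error_weights([0.5, 1.0, 0.25])
    print(ensemble_forecast(predictions, weights))
```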

Additionally, the integration of AI-based flood forecasting with emerging technologies like 6G networks is on the horizon. Once 6G networks reach deployment maturity, projected for around 2032-2035, they should enable real-time transmission of high-resolution data from IoT sensors, drones, and satellites, facilitating more accurate and timely flood predictions, even in remote or resource-constrained areas.

Looking ahead, the next decade promises to be a transformative period for AI-based flood forecasting:

From 2023 to 2026, the adoption of edge computing and edge AI will allow data to be processed and decisions to be made close to the sensors themselves, reducing latency and improving the responsiveness of flood forecasting systems.

Between 2024 and 2028, the integration of diverse data sources, such as social media, crowdsourced information, aerial imagery from drones, and high-resolution satellite data, will provide a more comprehensive understanding of flood situations, leading to improved forecasting accuracy and situational awareness.

From 2025 to 2029, the emphasis will be on explainable AI and trustworthiness, ensuring that stakeholders understand the underlying reasoning behind the AI models' predictions and fostering trust in these critical decision-making tools.

In the 2026-2030 timeframe, distributed AI and federated learning approaches will gain traction, addressing data privacy concerns and enabling collaborative model development across organizations and regions.

Advances in deep learning and transfer learning techniques will continue between 2027 and 2031, leading to more accurate and efficient flood forecasting models that can be adapted to new regions or scenarios with reduced data collection and training efforts.

From 2028 to 2032, the trend will shift towards hybrid models and ensemble forecasting, combining different AI techniques and leveraging the strengths of multiple models to improve overall accuracy and robustness of flood predictions.

Between 2029 and 2033, there will be an increased emphasis on collaboration and data sharing among government agencies, research institutions, and private companies at both national and international levels. This will facilitate the development of more comprehensive and accurate flood forecasting systems, benefiting communities at risk across borders.

As the decade progresses, from 2030 to 2034, ethical considerations and fairness will take center stage, ensuring that AI-based flood forecasting systems do not perpetuate biases or disadvantage certain communities, and that their decision-making processes are transparent and equitable, particularly in the context of cross-border flood events.

Finally, as 6G networks reach deployment maturity from 2032 to 2035, AI-based flood forecasting systems will leverage the advanced capabilities of these networks, such as ultra-high bandwidth, ultra-low latency, and massive connectivity. This will enable real-time transmission of high-resolution data from IoT sensors, drones, and satellites, further enhancing the accuracy and timeliness of flood predictions, especially in remote or resource-constrained areas.

The journey of AI-based flood forecasting, from its humble beginnings in the late 1990s to the transformative potential it holds for the future, is a testament to the power of innovation, collaboration, and technological advancement. As the world grapples with the increasing frequency and severity of flood events, driven by factors such as urbanization and climate change, these technologies offer a glimmer of hope, empowering communities, governments, and organizations to better prepare, respond, and mitigate the devastating impacts of these natural disasters.

Through a global collective effort, involving researchers, developers, policymakers, and stakeholders from diverse backgrounds and regions, AI-based flood forecasting has the potential to become a cornerstone of resilience, safeguarding lives, livelihoods, and ecosystems worldwide.

#02 🏠🧠💡 | memory care centers.

Memory Care Design is a specialized area of architecture and environmental design that has gained increasing significance and urgency in recent years due to the global phenomenon of population aging and the rise in the prevalence of Alzheimer's disease, dementia, and other cognitive impairments. This field has evolved significantly over the past few decades, driven by advancements in research, changing care philosophies, and a growing understanding of the profound impact that the built environment can have on the well-being and quality of life of those with cognitive impairments.

According to the World Health Organization (WHO), the share of the world's population aged 60 years and older is expected to nearly double, from 12% to 22%, between 2015 and 2050. This demographic shift is particularly pronounced in many non-Western countries, where population aging is occurring at an unprecedented rate. For example, in China, the number of people aged 60 and above is projected to increase from 241 million in 2020 to 487 million by 2050, accounting for nearly 35% of the country's total population (United Nations, 2019).

As populations age, the prevalence of age-related cognitive impairments, such as Alzheimer's disease and other forms of dementia, is also expected to rise dramatically. According to Alzheimer's Disease International (ADI), an estimated 46.8 million people worldwide were living with dementia in 2015, a number projected to increase to 131.5 million by 2050 (ADI, 2015).

This global demographic shift and the increasing burden of cognitive impairments have significant implications for healthcare systems, social services, and the built environment. Addressing the growing demand for specialized memory care facilities that prioritize the well-being, safety, and dignity of individuals with cognitive impairments has become a critical imperative for communities and economies around the world.

While the principles and themes of memory care design have their roots in Western research and practices, the application of these principles has become increasingly global, with innovative approaches and examples emerging from non-Western regions.

Japan:

As one of the world's most rapidly aging societies, Japan has been at the forefront of developing innovative memory care solutions. The "Group Home" model, which emphasizes small-scale, homelike environments with a focus on personalized care and resident autonomy, has gained popularity in Japan. These group homes typically accommodate between 5 and 10 residents and are designed to foster a sense of familiarity and community.

One notable example is the Kamikyo Group Home in Tokyo, which features a circular layout with a central courtyard, allowing residents to move freely and engage with nature while maintaining a secure environment. The design incorporates familiar elements such as traditional Japanese gardens and residential-style furnishings to promote a sense of comfort and familiarity.

Singapore:

With a rapidly aging population and a high prevalence of dementia, Singapore has prioritized the development of memory care facilities and specialized dementia care services. The Khoo Teck Puat Hospital's Dementia Care facility, completed in 2010, is a pioneering example of memory care design in the region.

The facility incorporates principles such as circular paths, visible destinations, and recognizable landmarks to aid in wayfinding and reduce disorientation. It also features dedicated activity areas, therapeutic gardens, and sensory-stimulating elements like water features and artwork to promote engagement and social interaction among residents.

China:

As China's population ages rapidly, the demand for memory care facilities is expected to surge. In response, several innovative memory care communities have emerged, incorporating traditional Chinese design elements and principles.

The Yue Xiu Dementia Care Center in Guangzhou, completed in 2019, is a prime example of this approach. The center features a courtyard-style layout inspired by traditional Chinese architecture, with recognizable landmarks and familiar elements such as gardens, water features, and residential-style furnishings. The design aims to create a sense of familiarity and promote a calming, therapeutic environment for residents.

South Africa:

In South Africa, where the prevalence of dementia is expected to increase significantly in the coming decades, several memory care facilities have been designed to address the unique cultural and social needs of the local population.

The Livewell Village in Johannesburg, completed in 2018, is a purpose-built memory care community that incorporates elements of traditional African architecture and design principles. The village features circular paths, outdoor spaces, and recognizable landmarks to aid in wayfinding, while also providing opportunities for social engagement and community activities.

As memory care design principles are adopted and adapted across diverse cultural and regional contexts, ongoing research and collaboration among architects, healthcare professionals, and experts in dementia care are essential. Researchers and practitioners in non-Western regions are actively contributing to the body of knowledge in this field, providing valuable insights and data on the unique needs and preferences of their respective populations.

For example, a study conducted in Malaysia by researchers at the Universiti Teknologi MARA explored the design preferences of Malaysian caregivers and individuals with dementia, highlighting the importance of incorporating familiar cultural elements and promoting social interaction in memory care environments (Zairul & Sharifah, 2018).

Similarly, a study by researchers at the University of Hong Kong examined the impact of environmental design on the well-being and behavior of individuals with dementia in Chinese nursing homes, emphasizing the need for personalized and culturally relevant design solutions (Cheng et al., 2020).

As the global population continues to age and the prevalence of cognitive impairments rises, the demand for specialized memory care facilities is expected to increase sharply across both developed and developing economies. This demand is driven not only by demographic shifts but also by a growing recognition of the importance of creating environments that prioritize the well-being, dignity, and quality of life of individuals with cognitive impairments.

According to a report by Alzheimer's Disease International (ADI), the global cost of dementia was estimated at US$818 billion in 2015 and is projected to reach $2 trillion by 2030, highlighting the significant economic burden associated with cognitive impairments (ADI, 2015). Investing in the design and development of specialized memory care facilities can not only improve the quality of life for those affected but also potentially alleviate some of the economic and social burdens associated with dementia care.

Furthermore, as societies around the world strive to promote inclusive and age-friendly communities, the principles of memory care design are increasingly being integrated into broader urban planning and design strategies. Concepts such as dementia-friendly communities, which prioritize accessible and supportive environments for individuals with cognitive impairments, are gaining traction globally.

Memory care design has evolved into a vital and rapidly expanding field that addresses a pressing global imperative. As populations age and the prevalence of cognitive impairments rises, the demand for specialized memory care facilities that prioritize the well-being, safety, and dignity of individuals with conditions like Alzheimer's disease and dementia will continue to grow. Although its principles originated in Western research and practice, they are now being applied and adapted across diverse cultural and regional contexts. Ongoing research, collaboration, and a commitment to creating environments that prioritize the unique needs and preferences of individuals with cognitive impairments will remain essential as this field continues to evolve.

#03 🌐✊🔗 | the fediverse.

The Fediverse is a collective term that encompasses a constellation of decentralized social networks and platforms that challenge the centralized models championed by the tech giants that have dominated the digital realm for decades. To fully grasp the significance of the Fediverse, it is imperative to trace the historical pendulum swing between centralization and decentralization, a narrative that has profoundly shaped the trajectory of computing and the internet since their inception.

The early days of computing were characterized by a centralized paradigm, where mainframe computers reigned supreme. These imposing monoliths, manufactured by industry titans such as IBM (International Business Machines Corporation, founded in 1911 in New York), Burroughs (founded in 1886 in Detroit), Univac (Universal Automatic Computer, a division of Remington Rand, established in 1946 in Pennsylvania), and Control Data Corporation (founded in 1957 in Minnesota), dominated the computing landscape of the 1960s and 1970s. Access to these powerful systems was heavily centralized, with corporations, universities, and government agencies owning and operating them as isolated computing hubs. The acquisition, operation, and maintenance of these mainframes were expensive endeavors, requiring specialized personnel and infrastructure, further solidifying the centralized nature of computing during this era.

However, the 1970s and 1980s ushered in a seismic shift as personal computers (PCs) emerged, democratizing computing power and bringing it directly into the hands of individuals and businesses. This decentralization of technology was spearheaded by pioneering companies such as Apple, with the iconic Apple II (introduced in 1977 by Steve Wozniak and Steve Jobs in Cupertino, California), IBM with the groundbreaking IBM PC (launched in 1981 in New York), and Commodore with the wildly popular Commodore 64 (released in 1982 in Pennsylvania). These personal computing devices empowered users, fostering innovation and enabling a thriving ecosystem of software development and experimentation across diverse sectors and communities globally.

Parallel to the personal computing revolution, another seismic shift was unfolding – the advent of the Internet. Originally conceived as ARPANET (Advanced Research Projects Agency Network), a research project funded by the U.S. Department of Defense in the 1960s, the Internet evolved into a vast, decentralized network of networks that transcended geographical boundaries. Key innovations like the TCP/IP protocol (Transmission Control Protocol/Internet Protocol, developed by Vint Cerf and Bob Kahn in the 1970s), HTTP (Hypertext Transfer Protocol, created by Tim Berners-Lee in 1989), and HTML (Hypertext Markup Language, also developed by Tim Berners-Lee in 1990) laid the foundation for the World Wide Web. This decentralized architecture, built on open standards and protocols, fostered innovation on an unprecedented scale and democratized access to information globally, breaking down barriers and empowering individuals and communities worldwide.

Despite the decentralized origins of the internet, the early 2000s witnessed a wave of centralization with the rise of Web 2.0 platforms and cloud computing services. Companies like Google (founded in 1998 by Larry Page and Sergey Brin in California), Facebook (launched in 2004 by Mark Zuckerberg and co-founders in Massachusetts), Amazon Web Services (AWS, introduced in 2006 by Amazon.com, founded in 1994 by Jeff Bezos in Washington), and Microsoft Azure (released in 2010 by Microsoft Corporation, established in 1975 by Bill Gates and Paul Allen in New Mexico) emerged as dominant players. These platforms and services offered centralized solutions, leveraging user-generated content and providing convenience and scalability through cloud computing. However, this centralization raised concerns about data privacy, security, and the potential for monopolistic practices, as these companies amassed vast troves of user data and wielded significant influence over the digital landscape.

The pendulum swung back towards decentralization with the advent of blockchain technology and cryptocurrencies like Bitcoin, introduced in 2008 by the pseudonymous Satoshi Nakamoto (whose true identity remains unknown). Blockchain offered a decentralized, transparent, and secure way to record transactions and data, challenging the centralized models of traditional financial institutions. This pioneering technology reignited the decentralization narrative and paved the way for a resurgence of decentralized technologies, including peer-to-peer networks and distributed storage solutions. Initiatives like the Interplanetary File System (IPFS, created in 2015 by Juan Benet), a decentralized storage and distribution protocol, and projects like Filecoin (launched in 2017 by Protocol Labs) further advanced the cause of decentralized data storage and content distribution.

Amidst this resurgence of decentralization, the Fediverse emerged as a collective term for decentralized social networks and platforms that embrace open standards and protocols. Unlike traditional social media platforms like Facebook, Twitter (launched in 2006 by Jack Dorsey, Noah Glass, Biz Stone, and Evan Williams in California), and Instagram (acquired by Facebook in 2012, originally created by Kevin Systrom and Mike Krieger in 2010 in California), which are centralized and controlled by a single entity, the Fediverse is a federated ecosystem. In this ecosystem, individual servers or instances can communicate and interact with each other, forming a decentralized network.

One of the most prominent examples of the Fediverse is Mastodon, a decentralized microblogging platform that offers a Twitter-like experience but without the centralized control. Mastodon was created in 2016 by Eugen Rochko, a software developer based in Germany. Users can join different instances or "servers" hosted by individuals, organizations, or communities, each with its own rules, moderation policies, and content guidelines. These instances can communicate and share content with each other, creating a decentralized social network that promotes user autonomy and privacy.

Beyond Mastodon, the Fediverse encompasses a diverse array of decentralized platforms, each serving a specific purpose. PeerTube, for instance, is a decentralized video hosting platform that allows users to upload, share, and watch videos without relying on centralized platforms like YouTube (owned by Google since 2006). PeerTube is developed with the backing of Framasoft, a French non-profit organization dedicated to promoting free and open-source software, and reached its 1.0 release in 2018. Pixelfed, on the other hand, is a decentralized photo-sharing platform, offering an alternative to centralized services like Instagram. Pixelfed was launched in 2018 by Daniel Supernault, a software developer based in Canada.

The underlying philosophy of the Fediverse is rooted in the principles of openness, interoperability, and user control. By leveraging open standards and protocols like ActivityPub (a decentralized social networking protocol published as a W3C Recommendation in 2018), the Fediverse enables seamless communication and content sharing across different instances and platforms. This interoperability empowers users to choose the instances and platforms that align with their values and preferences while maintaining the ability to interact with users on other instances, fostering a diverse and inclusive digital community.
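To make the interoperability concrete, here is a minimal sketch of the kind of message one server might send to another: a "Create" activity wrapping a "Note", using the ActivityStreams vocabulary that ActivityPub builds on. The actor URL and the `build_create_note` helper are hypothetical, and a real server would also add identifiers, timestamps, and signed HTTP delivery, all of which are omitted here.

```python
import json

# Standard ActivityStreams 2.0 context and public-addressing URIs.
AS_CONTEXT = "https://www.w3.org/ns/activitystreams"
AS_PUBLIC = "https://www.w3.org/ns/activitystreams#Public"


def build_create_note(actor_url: str, content: str) -> dict:
    """Build a minimal public 'Create' activity containing a 'Note'."""
    note = {
        "type": "Note",
        "attributedTo": actor_url,
        "content": content,
        "to": [AS_PUBLIC],
    }
    return {
        "@context": AS_CONTEXT,
        "type": "Create",
        "actor": actor_url,
        "to": [AS_PUBLIC],
        "object": note,
    }


if __name__ == "__main__":
    # social.example is a placeholder domain, not a real instance.
    activity = build_create_note("https://social.example/users/alice", "Hello, Fediverse!")
    print(json.dumps(activity, indent=2))
```

Because the message is plain JSON in a shared vocabulary, any platform that understands it can display the post, whether it is a microblogging, video, or photo-sharing service.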

The Fediverse represents a paradigm shift in how we perceive and interact with social media and online platforms. Instead of relying on centralized entities that control and monetize user data, the Fediverse offers a decentralized alternative where users can regain control over their data and online experiences. This decentralized approach mitigates the risks associated with centralized platforms, such as data breaches and misuse (like the Cambridge Analytica scandal that rocked Facebook in 2018), censorship (a contentious issue faced by platforms like Twitter and YouTube), and algorithmic manipulation (a concern raised by critics of social media giants' content recommendation systems).

Looking to the Future: Trends and Possibilities

As the Fediverse continues to gain traction, several trends and possibilities emerge, shaping the future of decentralized social networks and platforms:

Seamless Cross-Platform Integration:

While the Fediverse currently encompasses various platforms catering to specific needs (e.g., microblogging, video hosting, photo-sharing), the future may bring greater integration and interoperability across these platforms. Users could seamlessly share and consume content from different decentralized platforms, fostering a more cohesive and interconnected decentralized ecosystem. Standards work begun by the W3C Social Web Working Group, chartered in 2014 by the World Wide Web Consortium (W3C) and responsible for ActivityPub, provides a foundation for this cross-platform integration within the Fediverse.

Decentralized Identity and Data Sovereignty:

The Fediverse aligns with the broader movement towards decentralized identity and data sovereignty. Initiatives like self-sovereign identity (SSI), which empowers individuals to control their digital identities and personal data, and decentralized identifiers (DIDs), a standardized way of representing digital identities on decentralized networks, could be integrated into the Fediverse. Projects like Sovrin (launched in 2016 by the Sovrin Foundation) and uPort (created in 2016 by ConsenSys, a blockchain software company) are pioneering efforts in the realm of decentralized identity solutions.

Decentralized Monetization Models:

While the Fediverse currently operates on a non-commercial basis, there is potential for exploring decentralized monetization models that align with the principles of user autonomy and privacy. Blockchain-based solutions, such as decentralized autonomous organizations (DAOs) and tokenized ecosystems, could provide alternative revenue streams for content creators and platform developers. Projects like Steem (launched in 2016 by Steemit Inc.), a blockchain-based social media platform that rewards users with cryptocurrency for creating and curating content, and Brave (introduced in 2015 by Brendan Eich, co-founder of Mozilla), a privacy-focused web browser with a built-in cryptocurrency reward system, are examples of decentralized monetization models in the digital realm.

Regulatory Challenges and Governance:

As the Fediverse gains mainstream adoption, regulatory challenges and governance models will need to be addressed. Questions around content moderation, legal liability, and user privacy will require thoughtful consideration and collaborative efforts to ensure the Fediverse remains a safe and responsible space while preserving its decentralized ethos. In the European Union, the Digital Services Act (DSA), proposed in 2020 and approved in 2022, aims to regulate online platforms and address issues like content moderation and user protection, which could have implications for the Fediverse. Similarly, in the United States, the ongoing debates around Section 230 of the Communications Decency Act (passed in 1996) and its potential reform could impact the legal landscape for decentralized platforms.

Decentralized Application Ecosystem:

Beyond social networking, the Fediverse could pave the way for a broader ecosystem of decentralized applications (DApps) built on open standards and protocols. This could encompass decentralized productivity tools, collaborative platforms, and other innovative applications that leverage the power of decentralized networks. Projects like Arweave (launched in 2018), a decentralized storage and applications platform, and Ethereum (introduced in 2015 by Vitalik Buterin), a decentralized computing platform that supports DApps, are examples of technologies that could potentially integrate with or inspire decentralized applications within the Fediverse ecosystem.

The Fediverse represents a profound shift in how we perceive and interact with digital platforms, challenging the centralized models that have dominated the tech landscape for decades. By embracing decentralization, openness, and user autonomy, the Fediverse offers a glimpse into a future where individuals reclaim control over their online experiences, data, and digital identities. As this movement gains momentum across the globe, it has the potential to reshape the dynamics of power, privacy, and innovation in the digital realm, ushering in a new era of decentralized collaboration and empowerment that transcends borders and fosters inclusivity within the global digital community.

#04 🚧🌱⚡ | battery-powered cranes.

The Netherlands' drive to electrify and decarbonize the construction sector positions it to be a global leader in an industry undergoing a transformative shift towards sustainability against the backdrop of massive projected growth worldwide.

The global construction market is enormous - valued at $11.4 trillion in 2022 and expected to reach $16.6 trillion by 2030 according to Research and Markets. This growth is fueled by increasing urbanization, population growth concentrated in cities, and infrastructure investment in developing economies.
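The two market figures above imply an average annual growth rate, which can be checked with a few lines of arithmetic. This is a minimal sketch that assumes smooth compound growth between 2022 and 2030; the function name `cagr` is illustrative and not taken from the cited report.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate implied by a start value, an end value and a span in years."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted above, in trillions of US dollars.
rate = cagr(11.4, 16.6, 2030 - 2022)
print(f"Implied growth: {rate:.1%} per year")  # roughly 4.8% per year
```

Under that assumption, the projection corresponds to steady growth of a little under 5% per year rather than a sudden jump.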

However, the environmental impact is staggering. The International Energy Agency reports that the construction industry accounts for 36% of global final energy consumption and 37% of energy-related CO2 emissions when factoring in manufacturing of materials like steel and cement. On-site activities like operating diesel equipment also contribute significantly to emissions, air pollution, and noise.

There is now increasing pressure worldwide from both regulatory bodies and activists to dramatically reduce construction's carbon footprint. The EU's Renovation Wave strategy aims to double annual energy renovation rates for buildings by 2030. The state of California has mandated reducing emissions 40% below 1990 levels by 2030 across all sectors including construction.

This creates a massive market opportunity for innovative solutions that enable truly low-emission, sustainable construction practices - estimated to be over $420 billion per year by 2025 according to the World Economic Forum. Renewable energy, green building materials and electrification of equipment have been identified as key pillars.

The Netherlands has been aggressively positioning itself at the forefront through a multi-pronged strategy:

Policy & Investment:

Binding emissions reduction targets, tax incentives and over €60 million in direct government innovation funding for companies developing electric construction equipment like excavators, cranes, cement mixers.

Test Environments:

Cities are designating zero-emissions construction zones where only electric/renewable equipment is allowed - providing critical real-world test beds. Rotterdam and Amsterdam are leading examples.

Manufacturing Ecosystem:

Equipment suppliers with a long-established presence in the Netherlands, such as Pon Equipment, Huisman and Volvo CE, are now introducing fully-electric product lines designed specifically for emissions-free job sites.

Skills Development:

Partnerships between industry, vocational schools and universities (TU Delft, Fontys) are developing curricula for electric equipment operations, maintenance and manufacturing.

Policy Leadership:

Ministers like Dilian Tusk are advocating for the Netherlands to be a "sustainable construction pioneer" and knowledge exporter through initiatives like the EU's Renovate2Recover program.

With strengths like a dense urban environment acting as a living lab, availability of renewable energy sources, a concentration of major equipment companies and top technical universities, the Netherlands has the ingredients to be an innovation hub.

If successful, Dutch firms will be well-positioned to export electrified equipment, sustainability consulting and training globally as regulations tighten to limit emissions. The domestic industry could secure a significant competitive advantage, new jobs and lasting influence in this rapidly expanding sector for decades.

The Netherlands is making a strategic, multi-sector bet that by transforming one of the most polluting industries into a model of sustainability, it can capture export value while elevating its position as a progressive climate leader driving the global transition to low-carbon construction practices at scale.

#05 🚫🔓🚨 | flipper zero ban & controversies.

The technological landscape's perpetual evolution has catalyzed an inexorable expansion of the attack surfaces pervading every facet of modern digital life. As our world becomes increasingly interconnected through the proliferation of cyber-physical systems, Internet of Things deployments, and the convergence of operational technologies with traditional IT environments, new vulnerability domains are emerging at an unprecedented pace. Notably, the realm of wireless communications has rapidly expanded to encompass a constellation of short-range digital radio protocols and frequencies now ubiquitous across consumer devices, vehicles, industrial equipment, and critical infrastructure control systems. From Bluetooth and Zigbee to Z-Wave, LoRa, proprietary ISM radios, and digital access control implementations, this slice of the attack surface has ballooned ahead of adequate defensive hardening and rigorous penetration testing - until a vanguard of innovators embraced the ethical hacking mindset.

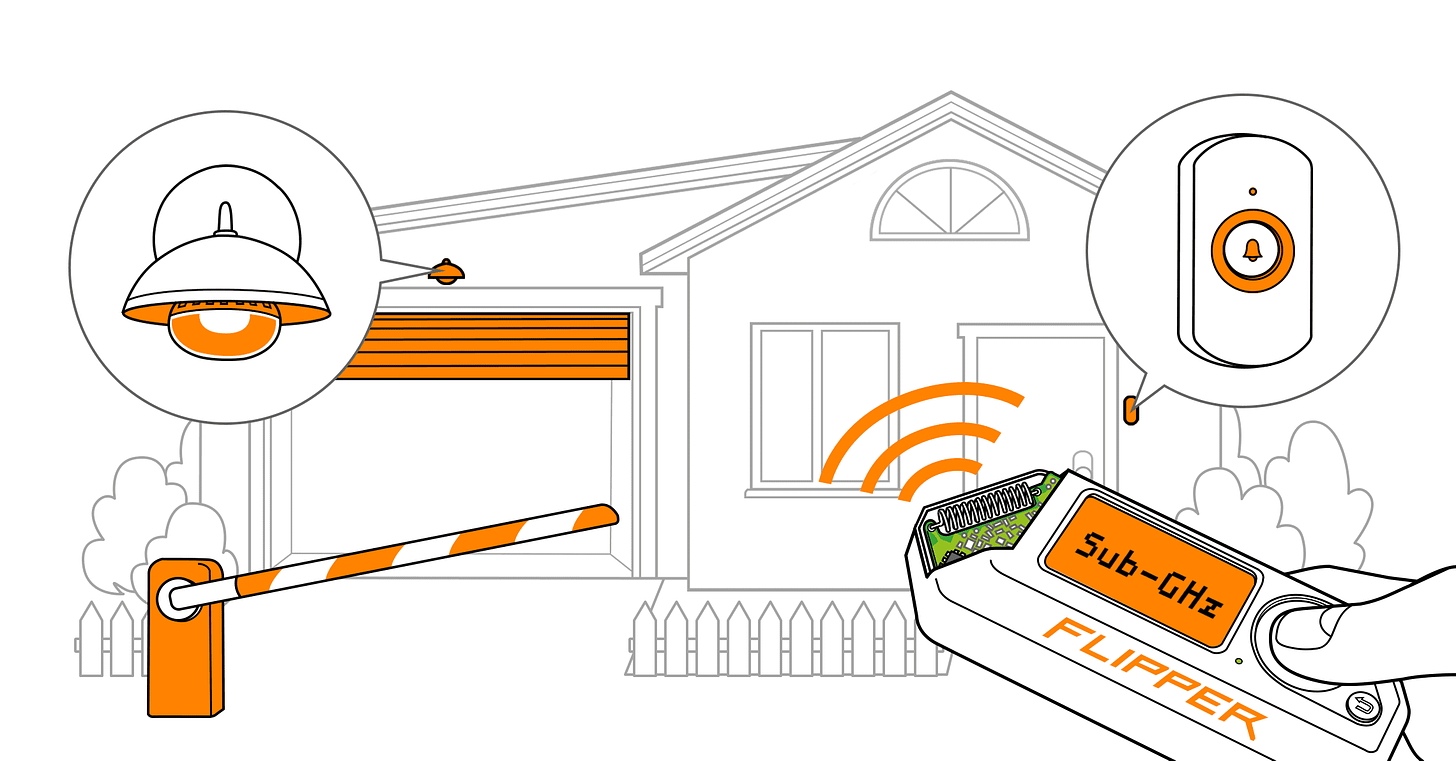

Embodying this pioneering ethos, a seemingly innocuous device dubbed the Flipper Zero has crystallized as a revolution catalyzing more robust wireless security research and vulnerability remediation. Developed through a crowdsourced funding initiative, this compact, handheld, open-source hardware platform concentrates an unprecedented array of offensive radio frequency analysis and manipulation capabilities into an affordable, integrated package precisely tailored for legitimate penetration testing and coordinated vulnerability research undertakings. The Flipper Zero has rapidly cultivated an engaged global community of technologists and makers who continually expand its functionalities through innovative projects like the "Mayhem Hat" expansion board integrating additional wireless radios and a camera module. This ecosystem has enabled imaginative applications beyond the device's original security research intentions, with enthusiasts harnessing Flipper Zero as a multi-factor authentication token, a digital camera for 1-bit photography experiments, and even an actual physical key.

At its core, the Flipper Zero establishes a compact sub-GHz radio workbench, transmitting and receiving on its supported bands within the 300 MHz to 928 MHz range to enable mapping and interrogation of the wireless attack surface. The consolidated offensive testing capabilities integrated into its hardware and firmware components align to illuminate this domain's systemic weaknesses through rigorous analysis and simulated real-world exploitation scenarios. Passive acquisition, demodulation, and decoding of arbitrary wireless signal sources facilitate preliminary reconnaissance and protocol analysis across target environments. Leveraging this detected intelligence, subsequent testing vectors include unauthorized bypassing of access control system authentication through wireless command injection and credential cloning, wireless data extraction through sniffing and man-in-the-middle interventions, as well as direct radio-based disruptions and sensor manipulation achieved via strategic RF interference, noise injection, and signal spoofing techniques. Furthermore, GPIO hardware interfaces enable direct chip-off exploits against wireless microcontrollers, allowing researchers to exfiltrate and reverse engineer proprietary firmware to comprehensively map embedded vulnerabilities. This offensive testing arsenal positions the Flipper Zero as a pivotal force multiplier for uncovering entire classes of security vulnerabilities that conventional penetration testing methodologies have chronically underexplored.

However, the disruptive potential of the Flipper Zero's consolidated wireless hacking capabilities has sparked contentious debates and reactionary regulatory actions across public and private sectors. The open-source firmware has already birthed malicious customized builds like "Xtreme" enabling indiscriminate Bluetooth attack functionality against Android and Windows devices. While these activities clearly pervert the ethical principles driving the platform's original development, they underscore the challenges in curtailing potential exploits once innovations are openly disseminated across the internet. Adversaries have already begun targeting the security research community itself through phishing campaigns leveraging interest in the Flipper Zero in attempts to steal credentials and cryptocurrency holdings. Fake websites advertising free devices have proliferated as scams to deliver malicious browser extensions.

Concerned over a surge in vehicle theft incidents allegedly involving Flipper Zero capabilities, the Canadian government pursued regulations to prohibit importation and possession of the device entirely within its borders starting in February 2024. Significant e-commerce players like Amazon have already instituted blanket bans on the Flipper Zero's commercial distribution through their platforms, conflating its flexible wireless analysis toolkit with dedicated access control skimming devices explicitly designed for criminal applications. Brazilian authorities have actively seized inbound international shipments of the hardware to their nation over similar anxieties surrounding the security implications of the technology's domestic availability.

While specific anecdotal theft incidents prompted these forceful prohibitions, the reactionary measures underestimate the profound positive impact the Flipper Zero has had by elevating wireless security research from a fringe specialization into a high-priority consideration for both offensive and defensive entities. Pioneering research leveraging the platform's capabilities has uncovered disruptive systemic vulnerabilities spanning aviation asset tracking and logistics solutions, automotive control systems, energy grid infrastructure, healthcare Internet of Things deployments, industrial automation systems, and enterprise-class access control implementations. Each of these environments suffers from deeply rooted shortcomings in validating the resilience of wireless signal authentication, authorization, encryption, and non-repudiation safeguards - systemic weaknesses that could enable catastrophic disruptions or intellectual property exfiltration if they go undetected until repeated exploitation has real-world consequences.

For example, collaborative research between multiple leading academic institutions leveraged Flipper Zero's unparalleled RF analysis functionality to expose a pervasive lack of encryption, authentication, and access control mechanisms across wireless asset tracking protocols underpinning the entire aviation industry's logistics and inventory management solutions. Without systematic defenses, malicious actors could potentially monitor or manipulate the wireless authorizations managing maintenance components, air freight, or flight-line equipment in already high-risk operating environments.

Elsewhere, a telecommunications security firm's internal penetration test at a prominent US-based manufacturing plant uncovered that the wireless protocols controlling the facility's industrial automation systems and robotic assembly lines failed to implement even rudimentary authentication or encryption safeguards. Using the Flipper Zero's capabilities to wirelessly capture firmware from the industrial control components and subsequently reverse engineer the proprietary ISM radio implementations, the assessment team successfully demonstrated the ability to exfiltrate sensitive intellectual property while also achieving remote unauthorized command execution to actively disrupt production operations.

In the transportation sector, a comprehensive vulnerability research initiative isolated multiple zero-day deficiencies across 25 vehicle models from leading manufacturers - all stemming from weaknesses in authenticating the proprietary passive keyless entry systems, remote vehicle immobilizers, and tire pressure monitoring system radios governing modern automotive wireless functionality. Leveraging Flipper Zero's RF transmission capabilities, researchers definitively proved paths to bypass authorized driver authentication, remotely unlock vehicle entrances, and even start engines without legitimate credentials by reverse engineering and replaying proprietary radio commands.
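The replay weakness described above, and the standard defense against it, can be illustrated with a toy model. The sketch below is a hedged simplification in Python, not any manufacturer's actual protocol: the shared key, the frame layout (4-byte counter, command, truncated HMAC tag), and the class names `FixedCodeReceiver` and `RollingCodeReceiver` are all assumptions made for illustration. It shows why a receiver that accepts a static code can be opened by any recording, while one that authenticates an increasing counter rejects the same frame the second time.

```python
import hashlib
import hmac
import struct

SECRET = b"demo-shared-key"  # hypothetical key shared by key fob and receiver
TAG_LEN = 8                  # truncated HMAC length used in this toy frame format


def make_frame(counter: int, command: bytes, key: bytes = SECRET) -> bytes:
    """Build a frame: 4-byte big-endian counter, command bytes, truncated HMAC tag."""
    header = struct.pack(">I", counter) + command
    tag = hmac.new(key, header, hashlib.sha256).digest()[:TAG_LEN]
    return header + tag


class FixedCodeReceiver:
    """Accepts any frame equal to a static code, so a captured frame works forever."""

    def __init__(self, code: bytes) -> None:
        self.code = code

    def accept(self, frame: bytes) -> bool:
        return hmac.compare_digest(frame, self.code)


class RollingCodeReceiver:
    """Accepts a frame only if its tag verifies and its counter moves forward."""

    def __init__(self, key: bytes = SECRET, window: int = 16) -> None:
        self.key = key
        self.window = window      # how far ahead the fob counter may run
        self.last_counter = 0

    def accept(self, frame: bytes) -> bool:
        if len(frame) < 4 + 1 + TAG_LEN:
            return False
        header, tag = frame[:-TAG_LEN], frame[-TAG_LEN:]
        expected = hmac.new(self.key, header, hashlib.sha256).digest()[:TAG_LEN]
        if not hmac.compare_digest(tag, expected):
            return False          # forged or corrupted frame
        counter = struct.unpack(">I", header[:4])[0]
        if not (self.last_counter < counter <= self.last_counter + self.window):
            return False          # stale counter: this is a replay
        self.last_counter = counter
        return True


# A recorded static code opens the fixed-code receiver every time.
fixed = FixedCodeReceiver(b"\x12\x34\x56")
captured = b"\x12\x34\x56"
fixed_results = [fixed.accept(captured), fixed.accept(captured)]

# The same recording trick fails against the counter-based receiver.
rolling = RollingCodeReceiver()
first = make_frame(1, b"U")
rolling_results = [rolling.accept(first), rolling.accept(first)]
```

Here `fixed_results` is `[True, True]` and `rolling_results` is `[True, False]`. Real keyless entry systems add further measures, and counter-based schemes have their own known weaknesses, so this is only a model of the basic idea.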

The concerning realities illuminated by these "breach and expose" exercises accentuate the imperative of ethically cultivating offensive wireless security research capacities rather than suppressing or prohibiting the innovators and tools that catalyze these vulnerability discoveries. Without concerted efforts to validate and fortify systemic weaknesses across wireless attack surfaces, digitally enabled aviation, production, transportation, energy distribution, and healthcare infrastructures remain unnecessarily vulnerable. Malicious actors undeterred by legal restrictions will inevitably identify and covertly weaponize these same vulnerabilities - but in that scenario, society is caught unaware until the consequences of repeated exploitation manifest catastrophically. Only by fostering principled coordinated research initiatives that embrace the ethical hacking mindset can defensive mitigations keep pace and harden ahead of cyber offensives.

The moral and ethical implications inherent in concentrating such formidable offensive cyber capabilities into a unified, accessible hardware solution like Flipper Zero are profound and have ignited contentious discourse across the global security community. Critics fixate on the platform's potential for nefarious misuse or criminal exploitation, envisioning doomsday scenarios where malicious actors could leverage it to wreak havoc by breaching access control systems, hijacking wireless device communications, or actively disrupting critical infrastructure dependencies. These detractors advocate for constrictive governmental regulations or outright bans on technologies like the Flipper Zero under the guiding principle that deliberately arming society with powerful offensive cyber tools invariably undermines the defensive resilience efforts the tools purport to reinforce.

However, this myopic mentality fundamentally underestimates the generative capacity of the ethical hacking community and the robust governance frameworks that can strategically harness offensive innovation as a catalyst for fortifying societal cyber resilience. History has borne witness to the perpetual adversarial dynamics underpinning the never-ending battle between offensive actors and defensive entities across the digital security domain. Each seminal innovation or newly uncovered class of vulnerabilities fuels reciprocal advancements and counteractive mitigation techniques in rapid succession, continually elevating the defensive posture in a virtuous cycle. Suppressing cutting-edge offensive security research tools from reaching the hands of the white hat community distorts this balance, ceding an asymmetric strategic advantage to criminally-motivated actors operating in unconstrained environments while erecting artificial barriers to legitimate vulnerability identification and remediation.

The proponents of innovations like Flipper Zero actively recognize these principles, championing rigorous coordinated disclosure regimes and multi-stakeholder remediation workflows to ensure any high-impact findings systematically exhaust reasonable mitigation pathways before any vulnerability particulars become public knowledge. Comprehensive policies and governance controls mandate vulnerability intake protocols, standardized security advisory publication procedures, and archiving processes aligned with established international best practices. Crucially, research institutions, private firms, and government agencies spearheading trailblazing wireless security initiatives must attain accredited "star" designations grounded in stringent evaluations encompassing technical acumen, robust operational security capabilities, transparent oversight and governance mechanisms, and a demonstrable institutional track record of impeccable coordinated disclosure ethos. These foundational tenets collectively forge a principled framework where offensive cyber capabilities strategically enable more resilient defensive architectures for the greater public benefit.

Numerous high-profile multi-disciplinary research initiatives have already illuminated the pivotal contributions tools like the Flipper Zero can catalyze across mission-critical environments historically overlooked for wireless attack surface validation. Researchers from preeminent institutions have systematically uncovered disruptive vulnerabilities spanning energy distribution infrastructure, avionics environments, automotive control systems, healthcare Internet of Things deployments, and industrial automation deployments - each intrinsically dependent on the wireless communications stratum. Microcosmic examples illustrate the severity of these issues, including:

University researchers gaining unauthorized access to a regional power grid's substation control network by exploiting proprietary wireless protocols using a Flipper Zero, enabling potential disruptive attacks against energy distribution infrastructure if vulnerabilities persisted without timely remediation.

A multi-university collaborative exposing systemic vulnerabilities across aviation asset tracking and logistics solutions due to a lack of encryption, authentication, or access controls on widely deployed wireless inventory management standards, creating intellectual property exfiltration and operational disruption risks.

Ethical hackers uncovering the ability to unlock car doors, start engines remotely, and bypass authorized driver authentication on 25 vehicle models by reverse engineering proprietary digital wireless entry systems and immobilizer controls through comprehensive RF protocol dissection and radio frequency firmware analysis.

Internal security assessments mapping over 7,000 medical Internet of Things devices across a hospital complex, all operating on misconfigured unencrypted wireless transports and directly exposing crucial patient health records, monitoring systems, and surgical technologies to remote compromise or malicious interference.

A manufacturing facility assessment proving the ability to gain unauthorized wireless control of robotic assembly lines through reverse engineering of proprietary industrial control radio protocols, enabling intellectual property theft and potential operational disruptions.

While these findings catalyzed systemic security hardening and vulnerability remediation activities, they simultaneously illuminate a sobering reality – conventional cybersecurity practices and governance frameworks have deeply under-prioritized wireless attack surface risk assessments and rigorous penetration testing validations. Without the explicit integration of next-generation radio frequency analysis and emulation capabilities distilled into platforms like Flipper Zero, these vulnerabilities would likely persist undetected until repeated real-world exploitation and losses manifested catastrophic consequences. This concerning dichotomy starkly underscores the ethical imperative driving legitimate wireless security research activities.

To strategically cultivate an ecosystem where technologies like the Flipper Zero responsibly bolster societal cyber resilience rather than enabling misuse, a paradigm shift towards establishing a model akin to the prestigious Michelin star rating system should be enacted. This world-renowned framework for culinary excellence fostered an environment where exceptional craftsmanship flourished through universal evaluative criteria, accredited institutions, and continuous iterative refinement - elevating the entire domain as a revered art form. Adopting this ethos across the security sector would establish:

Institutions with robust infosec, governance, and institutional oversight programs would receive renewable "star" accreditations in alignment with their demonstrated ability to produce rigorous, high-quality security research while stringently adhering to coordinated disclosure practices, vulnerability handling protocols, and multi-stakeholder remediation facilitation across both private and public sector entities. These institutions would serve as trusted vectors operationalizing offensive cyber innovations while systematically elevating the defensive resilience posture.

Rather than being suppressed by blanket prohibitions or mired in stifling regulatory bureaucracy, novel cyber offensive technologies and testing methodologies would undergo standardized third-party validation aligned with universally accepted Acceptable Usage Policies tailored for each capability class. Tiered accessibility models ranging from independent researchers up to national cyber defense functions would seamlessly integrate cutting-edge innovations on accelerated timelines. An open marketplace coupled with structured training curricula and Olympic-style technology demonstrations would anchor this ecosystem, fostering individual advancement while rapidly disseminating emergent innovation across stakeholders.

Institutionalized coordinating bodies establish trusted disclosure workflows and vulnerability intake triage mechanisms to facilitate multi-party remediation activities ahead of any vulnerability release. Stringent legal safeguards, incentive frameworks, peer accountability processes, and accreditation revocation consequences collectively ensure utmost transparency while entities systematically exhaust reasonable remediation escalations and stakeholder notification protocols. Any attempts to subvert disclosure guarantees catalyze severe commercial, civil, and criminal repercussions in proportion to the severity of the violation.

As accredited institutions build credibility through successive research quality and adherence to established principles, a positive feedback cycle unlocks unprecedented transparency and collaboration with technology manufacturers, infrastructure operators, standards bodies, and regulatory agencies. Proprietary systems can receive comprehensive integrity validations while incentive programs reward organizations embracing proactive vulnerability research against products prior to general availability releases.

Structured peer review and reciprocal assessment processes foster an environment of perpetual improvement across the entire wireless security research ecosystem. Guilds and credentialing authorities elevate professional standards while "Star" renewal processes incorporate evolving best practice benchmarks. This continuous refinement posture collectively raises the skills, discipline, and sophistication of offensive research activities - forging a self-sustaining cycle enhancing societal resilience.

In this sustainable framework, technologies like the Flipper Zero transform from controversial symbols of potential misuse into catalytic forces bolstering our collective cyber resilience through the rigorous application of the ethical hacking mindset. The device does not inherently create vulnerabilities, but strategically illuminates systemic weaknesses before consequences manifest - accelerating our ability to defend harder through responsible coordinated remediation activities.

As digital innovation incessantly expands the wireless attack surface, we must resist the urges of shortsighted prohibition and fear. Instead, security leaders should embrace a future where offensive cyber research capacities are transparently cultivated and their impacts channeled into curated resilience initiatives. This paradigm shift positions the ethical hackers and white hat communities not as subversive threats, but as the vanguard whose very purpose centers on comprehensively identifying emergent vulnerabilities so we can systematically fortify against compromise before catastrophe strikes.

History has borne witness to the adversarial dynamics underpinning the perpetual battle between offensive and defensive entities - this fundamental truth is immutable. Suppressing pioneering innovations like the Flipper Zero only serves to distort this balance by ceding strategic advantages to those operating in the shadows, unencumbered by the principled governance regimes that can responsibly harness these technologies as catalysts for societal good. Rather than recoiling from the destructive potential of cyber offensives, we must strategically assess and ethically develop capabilities like the Flipper Zero within transparent frameworks fostering rigorous multi-stakeholder collaboration.

Only through this path can the ethical hackers of the world fully execute their mandates as sentinels illuminating the digital domain's darkest crevices. For it is in those shadows where the gravest undetected vulnerabilities inevitably loom, awaiting discovery before repeated exploitation manifests cyber catastrophe. Powerful technologies invariably carry risk, but their impacts hinge on the moral compass of those wielding the capabilities. The choice is one of prohibition and insecurity through suppression, or resilience cultivated through purposeful, principled action.

#06 🌿💨🇩🇪 | Germany’s cannabis legalization.

For decades, Germany's stance on cannabis embodied the harsh global prohibitionist mindset that took root in the early 20th century. The country solidified this approach by adhering to treaties like the 1925 International Opium Convention and enacting strict drug laws like the 1971 Narcotics Act (Betäubungsmittelgesetz), which criminalized the unauthorized possession, sale and production of cannabis and other substances.

However, Germany's path towards legalizing adult-use recreational cannabis unfolded gradually over many decades, driven by the intersection of multiple societal forces that steadily disrupted this draconian prohibitionist dogma. The anti-establishment youth countercultures of the 1960s prompted deeper questioning of these entrenched policies rooted in racist tropes, intergenerational moral panics around youth rebellion, and a lack of scientific understanding around cannabis's true properties and potential therapeutic benefits.

As the "reefer madness" propaganda myths lost credibility, German society witnessed the rising popularity of values favoring personal freedoms over government paternalism. This intersected with the nation's deeply-rooted Freikörperkultur philosophical embrace of naturalness detached from judgmental moralizing over individual lifestyle choices. Such perspectives steadily eroded the absolutist vilification of cannabis across subsequent generations.

While these countercultural movements did not immediately translate to policy changes, they created a socio-political climate more receptive to evidence-based policymaking over time. Landmark events accelerated this trajectory, such as Germany's rescheduling of synthetic THC compound dronabinol in the 1990s to facilitate medical research - a crucial first breach of prohibitionist dogma.

The pivotal turning point arrived in 2017 when Germany fully legalized cannabis for medical use amid mounting research validating its therapeutic efficacy. This breakthrough reflected decades of advocacy from medical professionals, policymakers like Burkhard Blienert and patient organizations highlighting cannabis's legitimate clinical applications and the human rights issues surrounding patient access.

The 2017 medical cannabis law paved the way for adult-use legalization in 2024 through the Cannabis Act (Cannabisgesetz). This historic legislation allowed German adults to legally possess and cultivate cannabis for personal use while also permitting establishment of non-profit "cannabis social clubs" for community cultivation and distribution - reflecting Germany's cautious, public health-focused approach inspired by models in Spain and Uruguay.

Economic factors also underscored the rationality of legalization. Analytical projections from groups like the German Cannabis Association estimated legal reforms could save over €2 billion annually in law enforcement costs while generating €4.7 billion in tax revenues to fund drug education, treatment and other public services. This harmonized with public health priorities centered on harm reduction by regulating cannabis to undercut hazards like unsafe black markets and overcriminalization's disproportionate community impacts.

The 2024 law did not license commercial cannabis production and sales; that step was deferred to planned regional pilot projects, leaving home cultivation and the non-profit clubs as the legal sources of supply. It set a minimum age of 18, with stricter quantity and THC limits for club members aged 18 to 21, and imposed quality control requirements and advertising bans on the clubs - reflecting policymakers' balance between personal freedoms and public health protections.

While no legislation perfectly resolves all concerns, Germany's incremental approach built on medical cannabis integration and global policy examples. It reflected hard-won acknowledgments from decades of prohibition's shortcomings in curbing recreational use while catalyzing a range of societal tolls through overcriminalization and empowered illicit markets.

Above all, Germany's path from prohibition to legalization manifested how drug policies reshape themselves amidst the churn of societal progress. As demographics shifted and new generations adopted more pragmatic perspectives around cannabis, the electorate and political classes grew receptive to reevaluating the substance through scientific lenses - prioritizing evidence over anecdotal propaganda, personal freedoms over paternalism, and public health-centered regulation over criminalization's cyclical failures.

The 2024 reforms codified how evidence-based policies continually evolve to realign with modern realities as humanity's socio-cultural progress rejects dogmatic inertia in favor of harm reduction, therapeutic compassion and individual liberties. Germany's journey exemplified the longstanding dynamic where evolving societal perspectives disrupt antiquated norms - a pattern likely to recur on myriad issues as human societies' values and empirical consensus render past legislative codes obsolete.

Germany provides a principled model for other nations to understand how pragmatic policymaking can bend towards societal enlightenment over generational timescales. While such seismic shifts take time, Germany's pioneering path integrated medical cannabis, navigated demographic changes, elevated civil society advocacy, and centered on responsible regulation and public trust-building.

The road ahead will require further refinement and vigilance. But modern Germany has institutionalized how persevering open discourse, ethical scrutiny of outdated dogmas, and faith in citizens' judgment can ultimately steer drug policies towards greater coherence with human rights, freedoms and the public good. In doing so, Germany has forged a pioneering policy framework for the world to study, debate and build upon.

#07 🤖🔧🌐 | autonomous maintenance tech.

Autonomous maintenance technology represents a paradigm shift in how we approach infrastructure upkeep, public space management, and urban maintenance. By harnessing the power of robotics, artificial intelligence, and decentralized autonomous organizations (DAOs), we can unlock unprecedented levels of efficiency, sustainability, and global collaboration.

At the core of this revolution lies the integration of autonomous systems with DAOs, enabling transparent coordination, open-source collaboration, and the development of robust, interoperable solutions tailored to the unique needs of communities worldwide.

DAOs offer a groundbreaking approach to maintaining and repairing critical urban infrastructure such as bridges, roads, and buildings. These organizations could facilitate the creation of decentralized networks of autonomous maintenance robots and drones, enabling a collaborative and data-driven approach to monitoring and addressing infrastructure challenges.

For example, a DAO could coordinate a fleet of autonomous drones equipped with advanced sensors, computer vision algorithms, and machine learning capabilities to inspect infrastructure across multiple cities or countries. Through community governance and decentralized decision-making, the DAO could allocate resources, schedule inspections, and prioritize repairs based on real-time data, community input, and predictive analytics, ensuring critical tasks are addressed promptly and efficiently.
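The prioritization step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Asset` fields, the `WEIGHTS` values, and the `priority` and `repair_queue` helpers are all hypothetical, and a real DAO would set such weights through its own governance process rather than hard-coding them.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    sensor_risk: float     # 0..1, from the latest drone inspection (hypothetical)
    predicted_risk: float  # 0..1, from a predictive model (hypothetical)
    votes_for: int         # community votes asking for this repair
    votes_total: int       # total votes cast on this asset


# Hypothetical weights; assumed here to be chosen by a governance vote.
WEIGHTS = {"sensor": 0.5, "predicted": 0.3, "community": 0.2}


def priority(asset: Asset) -> float:
    """Blend real-time data, predictive analytics, and community input."""
    community = asset.votes_for / asset.votes_total if asset.votes_total else 0.0
    return (
        WEIGHTS["sensor"] * asset.sensor_risk
        + WEIGHTS["predicted"] * asset.predicted_risk
        + WEIGHTS["community"] * community
    )


def repair_queue(assets: list[Asset]) -> list[Asset]:
    """Return assets ordered from most to least urgent."""
    return sorted(assets, key=priority, reverse=True)


if __name__ == "__main__":
    fleet_report = [
        Asset("Bridge A", 0.9, 0.6, 30, 100),
        Asset("Road B", 0.2, 0.3, 90, 100),
        Asset("Building C", 0.5, 0.8, 10, 100),
    ]
    for asset in repair_queue(fleet_report):
        print(asset.name, round(priority(asset), 2))
```

A weighted sum keeps the trade-off between sensor evidence and community preference explicit and easy to audit, which suits the transparency goals described here; other scoring schemes would work equally well.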

A potential real-world example is the Drones for Bridges initiative by the U.S. National Institute of Standards and Technology (NIST). This program aims to develop standardized methods for using drones to inspect and assess the condition of bridges, paving the way for more widespread adoption of autonomous maintenance technologies in this critical domain. By integrating with a DAO model, such initiatives could be scaled globally, leveraging the collective expertise and resources of a decentralized network to address infrastructure maintenance challenges in both developed and developing regions.

DAOs could revolutionize the management and maintenance of public spaces and parks worldwide by enabling decentralized networks of autonomous gardening robots, lawn mowers, and waste management systems. Community members could contribute their autonomous assets to the DAO's shared pool of resources, which would be efficiently allocated and utilized based on community needs, priorities, and real-time data.

For instance, a DAO focused on maintaining public parks could allow community members to contribute their autonomous lawn mowers or gardening robots. The DAO could then coordinate the deployment of these assets based on factors such as weather patterns, sensor data, and community feedback, ensuring optimal maintenance of public spaces while promoting sustainability and resource efficiency.
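As a rough sketch of how such coordination might look, the Python below assigns pooled mowers to parks using a simple greedy rule. Every name in it (`Park`, `plan_mowing`, the `threshold_cm` default, the mower identifiers) is a hypothetical stand-in, and the weather flag and grass-height reading are assumed inputs rather than any real sensor API.

```python
from dataclasses import dataclass


@dataclass
class Park:
    name: str
    grass_cm: float      # hypothetical sensor reading of grass height
    rain_expected: bool  # hypothetical weather forecast flag
    requests: int        # community feedback: number of mowing requests


def plan_mowing(parks: list[Park], mowers: list[str],
                threshold_cm: float = 8.0) -> dict[str, str]:
    """Greedily assign contributed mowers to the parks that need them most.

    Parks with rain forecast or short grass are skipped. The rest are ranked
    by grass height, with community requests as a tie-breaker.
    """
    eligible = [
        p for p in parks
        if not p.rain_expected and p.grass_cm >= threshold_cm
    ]
    eligible.sort(key=lambda p: (p.grass_cm, p.requests), reverse=True)
    # zip stops at the shorter list, so extra parks simply wait for the next round.
    return {park.name: mower for park, mower in zip(eligible, mowers)}


if __name__ == "__main__":
    parks = [
        Park("North", 12.0, False, 3),
        Park("South", 15.0, True, 9),
        Park("East", 9.0, False, 7),
        Park("West", 5.0, False, 1),
    ]
    print(plan_mowing(parks, ["mower-1", "mower-2"]))
```

In practice the ranking rule itself could be put to a community vote, so that the same shared pool serves different priorities in different neighborhoods.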

A notable example is Singapore's deployment of autonomous maintenance robots for tasks like cleaning public areas, monitoring infrastructure, and maintaining parks and gardens. Companies like LionsBot and Enzer have developed these robots, equipped with advanced sensors, AI algorithms, and cloud-based monitoring systems, enabling efficient and cost-effective maintenance operations. By integrating such autonomous systems with a DAO model, cities worldwide could benefit from decentralized governance, community engagement, and transparent resource allocation.

DAOs could foster the development of open-source autonomous maintenance platforms, enabling global collaboration and knowledge sharing among developers, researchers, and enthusiasts. Through decentralized governance and incentive mechanisms, these DAOs could facilitate the creation of robust, secure, and interoperable autonomous systems that can be adapted and deployed across various contexts and industries.

A potential example is a DAO focused on developing open-source software and hardware for autonomous maintenance robots. By leveraging the collective expertise and resources of a global community, this DAO could accelerate the pace of innovation and drive the creation of cutting-edge solutions that address unique maintenance challenges faced by different regions or industries.

Furthermore, initiatives like the Dronecode Project, an open-source platform for developing autonomous drone software, exemplify the potential for global collaboration in this space. By integrating with a DAO model, such platforms could foster decentralized governance, incentivize contributions, and facilitate the development of robust, secure, and interoperable autonomous maintenance systems tailored to diverse global needs.

DAOs could play a pivotal role in integrating autonomous maintenance technology with other emerging technologies, such as 3D printing and advanced energy harvesting and storage. By fostering collaboration and decentralized decision-making, these DAOs could facilitate the development of innovative solutions that combine the strengths of various technologies, creating more efficient, sustainable, and adaptable autonomous maintenance systems.

For instance, a DAO focused on developing autonomous construction and repair robots could bring together experts from diverse fields, such as robotics, 3D printing, and energy storage. Through decentralized governance and community-driven innovation, this DAO could unlock new possibilities for sustainable and efficient construction and maintenance practices on a global scale.

A prime example is the ongoing research at the University of Southern California (USC), where researchers are developing autonomous construction robots capable of 3D printing structures and conducting repairs on-site. By integrating these capabilities with a DAO model, a decentralized network of autonomous construction and repair robots could be established, enabling efficient and sustainable maintenance practices across various industries and regions worldwide.

#08 🥣❄️👨🍳 | freeze dried soups.

The origins of freeze-drying can be traced back to the early 20th century, when Jacques-Arsène d'Arsonval, a French biologist, pioneered low-temperature vacuum desiccation in 1906. However, it was not until the 1930s that freeze-drying gained traction as a preservation method for food products. During World War II, the process was further developed and utilized by the United States and allied forces to preserve blood plasma, penicillin, and other medical supplies for field use.

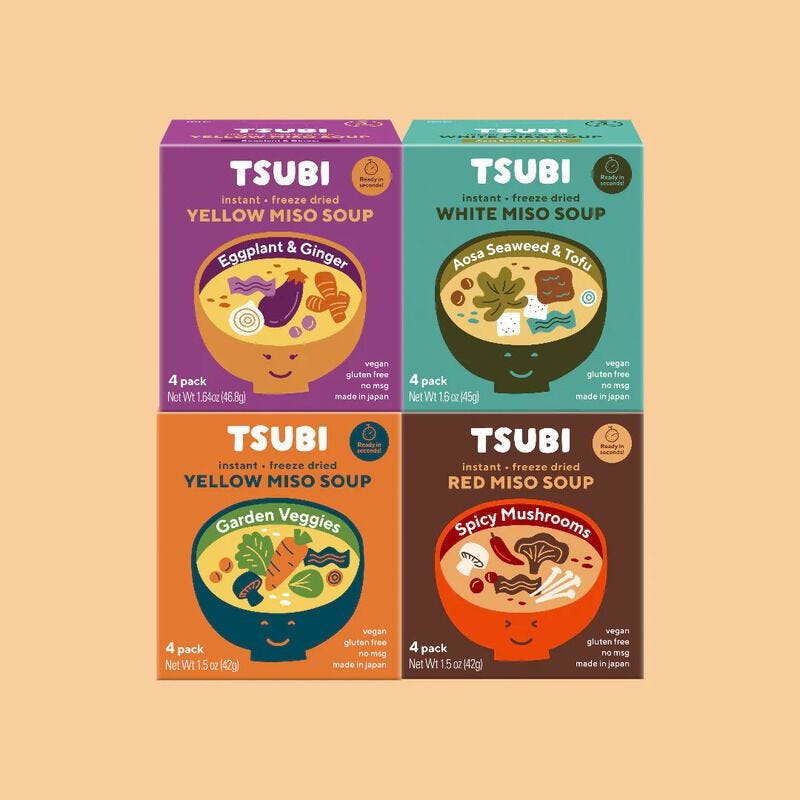

In the post-war era, the freeze-drying technology was adapted for commercial food production, initially focusing on coffee, tea, and soup mixes. One of the early pioneers in this field was the Nestlé company, which introduced its first freeze-dried soups under the Tonic brand in the late 1940s. These early freeze-dried soups were often criticized for their high sodium content and reliance on artificial preservatives to extend their shelf life.

The 1960s marked a significant turning point in the freeze-drying industry, with the advent of more advanced vacuum chambers and improved packaging techniques. Companies like Ralston Purina (now Nestlé Purina PetCare) and Freeze-Dry Foods (acquired by Nestlé in the 1970s) led the way in developing freeze-dried foods for both military and consumer markets.

One of the pioneers in freeze-drying technology was Dr. Roy Bingham, an American biochemist who founded Freeze-Dry Foods in 1963. Bingham's contributions included developing methods for freeze-drying meat, fruits, and vegetables while preserving their nutritional value and flavor. His work paved the way for more palatable and nutritious freeze-dried food products.

In the following decades, freeze-drying technology continued to evolve, with improvements in vacuum chambers, temperature control, and packaging materials. Companies like Oregon Freeze Dry, founded in 1963 in Oregon and the maker of the Mountain House brand, became leaders in the outdoor recreation and emergency preparedness markets, offering a wide range of freeze-dried meals and soups.

A significant breakthrough in freeze-drying technology came in the 1990s with the development of microwave-assisted freeze-drying. This process, pioneered by researchers at the University of California, Davis, and the U.S. Army Natick Soldier Center, used microwave energy to accelerate the drying process while maintaining the quality and nutritional content of the food.

In recent years, the freeze-drying industry has seen a resurgence driven by consumer demand for convenient, shelf-stable, and nutritious food products. Companies like Harmony House Foods, Wise Company, and Thrive Life have emerged as major players in the emergency preparedness and long-term food storage markets, offering freeze-dried soups, meals, and ingredients with improved flavor profiles and reduced sodium levels.

Advancements in packaging technology have also played a crucial role in the evolution of freeze-dried soups. Companies now utilize multilayer pouches and cans with advanced barrier properties, ensuring longer shelf life and better protection against moisture, oxygen, and light. Because the packaging itself now does much of the preservation work, these solutions have reduced the reliance on added preservatives that drew criticism to early freeze-dried soups.

Moreover, the rise of e-commerce and direct-to-consumer sales channels has facilitated the growth of smaller, artisanal freeze-drying companies catering to niche markets. These companies often emphasize the use of high-quality, locally sourced ingredients and offer a diverse range of freeze-dried soups and meals tailored to specific dietary preferences, such as vegan, gluten-free, or paleo-friendly options.

In terms of market opportunities, the freeze-dried food industry is expected to continue its growth trajectory, driven by increasing consumer demand for convenient, shelf-stable, and nutritious food options. The outdoor recreation and camping markets remain significant drivers, as freeze-dried meals and soups offer lightweight and easy-to-prepare options for hikers and backpackers.

Additionally, the emergency preparedness and long-term food storage markets are rapidly expanding, fueled by growing concerns about natural disasters, supply chain disruptions, and the desire for self-sufficiency. Freeze-dried soups and meals are well-suited for these applications due to their extended shelf life and ease of storage.

E-commerce matters on the market side as well: selling directly to consumers lets freeze-dried food companies bypass traditional retail channels, which allows smaller, specialized producers to thrive and serve niche markets with customized offerings.

Looking ahead, the freeze-drying industry is likely to continue innovating, with advancements in areas such as nutrient retention, flavor enhancement, and sustainable packaging solutions. Collaborations between food scientists, technologists, and manufacturers will be crucial in developing freeze-dried soups and meals that meet the evolving consumer demands for taste, convenience, and nutrition.

In conclusion, the journey of freeze-drying technology for soups and meals has been a fascinating one, spanning over a century of scientific advancements, technological breakthroughs, and evolving consumer preferences. From its humble beginnings as a preservation method for medical supplies during wartime to its current status as a cornerstone of the outdoor recreation and emergency preparedness markets, freeze-drying has transformed the way we think about shelf-stable, convenient, and nutritious food products. As the industry continues to innovate and adapt to changing consumer demands, the future of freeze-dried soups and meals remains bright, offering a wealth of opportunities for companies and entrepreneurs alike.

#09 👟🔄🌱 | open sourcing shoes.

Amid growing consumer consciousness and regulatory pressures, several pioneering frameworks have emerged, offering structured approaches to minimizing environmental impact and fostering responsible practices within the footwear sector.

Developed by the International Organization for Standardization (ISO) in 1996, the ISO 14001 standard provides a framework for organizations to implement an effective Environmental Management System (EMS). One of the early adopters, Nike, has leveraged this standard since the late 1990s to systematically manage its environmental responsibilities across its operations and sprawling supply chain.

According to Nike's annual impact reports, their ISO 14001-certified EMS has enabled them to identify and mitigate significant environmental impacts, ensure compliance with legal requirements, and set targets for continuous improvement. This work proceeded under the guidance of Hannah Jones, Nike's Vice President of Sustainable Business and Innovation.

First standardized in the late 1990s and updated in 2006, the Life Cycle Assessment (LCA) methodology, outlined in the ISO 14040 series of standards, offers a comprehensive approach to evaluating the environmental impacts of a product or service throughout its entire life cycle, from raw material extraction to disposal or recycling.

Nike's adoption of LCA studies, spearheaded by sustainability pioneer Sarah Severn, proved instrumental in identifying environmental hotspots within its supply chain. For instance, their LCA analysis in the early 2000s revealed that the manufacturing of synthetic rubber used in shoe soles contributed significantly to greenhouse gas emissions. This insight led to the development of Nike Grind, a material made from recycled shoes and manufacturing waste, now used in various products, including playgrounds and running tracks.